RPU -- A Reasoning Processing Unit

By Matthew Joseph Adiletta, Gu-Yeon Wei and David Brooks

Harvard University

Abstract

Large language model (LLM) inference performance is increasingly bottlenecked by the memory wall. While GPUs continue to scale raw compute throughput, they struggle to deliver scalable performance for memory bandwidth bound workloads. This challenge is amplified by emerging reasoning LLM applications, where long output sequences, low arithmetic intensity, and tight latency constraints demand significantly higher memory bandwidth. As a result, system utilization drops and energy per inference rises, highlighting the need for an optimized system architecture for scalable memory bandwidth.

Large language model (LLM) inference performance is increasingly bottlenecked by the memory wall. While GPUs continue to scale raw compute throughput, they struggle to deliver scalable performance for memory bandwidth bound workloads. This challenge is amplified by emerging reasoning LLM applications, where long output sequences, low arithmetic intensity, and tight latency constraints demand significantly higher memory bandwidth. As a result, system utilization drops and energy per inference rises, highlighting the need for an optimized system architecture for scalable memory bandwidth.

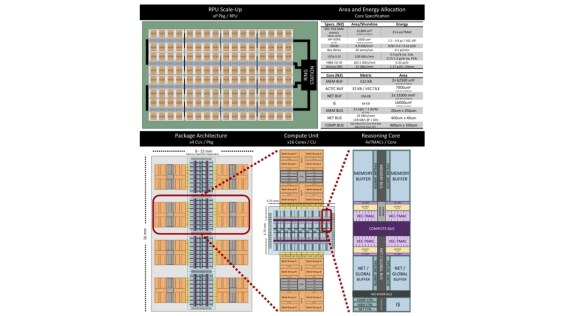

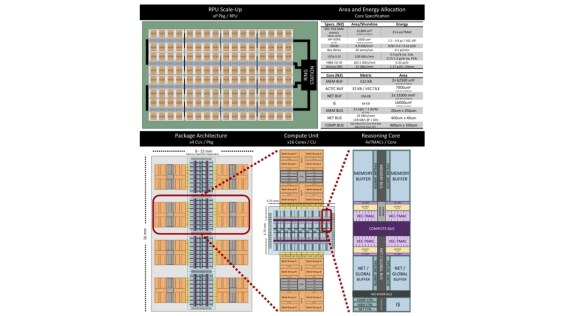

To address these challenges we present the Reasoning Processing Unit (RPU), a chiplet-based architecture designed to address the challenges of the modern memory wall. RPU introduces: (1) A Capacity-Optimized High-Bandwidth Memory (HBM-CO) that trades capacity for lower energy and cost; (2) a scalable chiplet architecture featuring a bandwidth-first power and area provisioning design; and (3) a decoupled microarchitecture that separates memory, compute, and communication pipelines to sustain high bandwidth utilization. Simulation results show that RPU performs up to 45.3x lower latency and 18.6x higher throughput over an H100 system at ISO-TDP on Llama3-405B.

To read the full article, click here

Related Chiplet

- DPIQ Tx PICs

- IMDD Tx PICs

- Near-Packaged Optics (NPO) Chiplet Solution

- High Performance Droplet

- Interconnect Chiplet

Related Technical Papers

- Performance Implications of Multi-Chiplet Neural Processing Units on Autonomous Driving Perception

- Leveraging 3D Technologies for Hardware Security: Opportunities and Challenges

- Material Needs and Measurement Challenges for Advanced Semiconductor Packaging: Understanding the Soft Side of Science

- Optimizing Inter-chip Coupler Link Placement for Modular and Chiplet Quantum Systems

Latest Technical Papers

- Predictive Software Scheduling as an Early-Warning Hint Layer for Optical Engine Thermal Drift in Heterogeneous SoIC Packaging

- Micro-Transfer Printing on Silicon Photonics: Tutorial, Recent Progress and Outlook

- CCD-Level and Load-Aware Thread Orchestration for In-Memory Vector ANNS on Multi-Core CPUs

- A Review of Multiscale Thermal Modeling in Heterogeneous 3D ICs

- Spying Across Chiplets: Side-Channel Attacks in 2.5/3D Integrated Systems