CHICO-Agent: An LLM Agent for the Cross-layer Optimization of 2.5D and 3D Chiplet-based Systems

By Qihang Wu, Aman Arora, and Vidya A. Chhabria

Arizona State University

Abstract

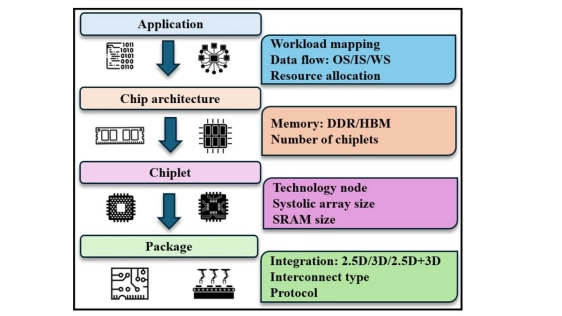

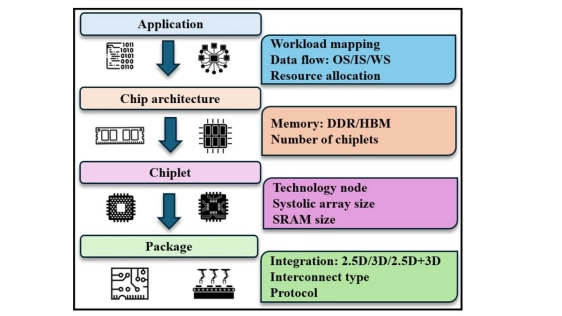

The rapid growth of large language models (LLMs) and AI workloads has pushed monolithic silicon to its reticle and economic limits, accelerating the adoption of 2.5D/3D chiplet systems. However, these systems increase design complexity by requiring co-design across multiple levels of the computing stack, including application, architecture, chip, and package. The resulting design space is highly combinatorial, with trade-offs among latency, energy, area, and cost. To address this challenge, we propose CHICO-Agent, an LLM-driven optimization framework for 2.5D/3D chiplet-based systems. CHICO-Agent maintains a persistent knowledge base to capture parameter-outcome trends and coordinates exploration through an admin-field multi-agent workflow. Compared with a simulated-annealing baseline, CHICO-Agent finds lower-cost configurations and provides an interpretable audit trail for designers.
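For readers unfamiliar with the baseline named above, the following is a minimal sketch of simulated annealing over a small discrete design space. It is not the authors' implementation: the parameter names (`num_chiplets`, `integration`, `tech_node_nm`), the toy cost model in `evaluate`, and the cooling schedule are all hypothetical stand-ins chosen only to illustrate how latency, energy, area, and cost terms might be folded into a single objective.

```python
import math
import random

# Hypothetical design space: names and values are illustrative assumptions,
# not parameters taken from the paper.
DESIGN_SPACE = {
    "num_chiplets": [1, 2, 4, 8],
    "integration": ["2.5D", "3D"],
    "tech_node_nm": [5, 7, 12],
}


def evaluate(config):
    """Toy weighted objective standing in for a real cross-layer evaluator."""
    n = config["num_chiplets"]
    stacked = config["integration"] == "3D"
    node = config["tech_node_nm"]
    latency = 10.0 / n + (0.5 if stacked else 1.5) * math.sqrt(n - 1)
    energy = 0.2 * node + (0.3 if stacked else 0.6) * n
    area = 100.0 * (node / 5.0) / (2.0 if stacked else 1.0)
    dollars = 500.0 / node + (8.0 if stacked else 3.0) * n
    # Arbitrary weights; a real study would choose or sweep these.
    return latency + energy + 0.01 * area + 0.05 * dollars


def neighbor(config, rng):
    """Return a copy of config with exactly one parameter changed."""
    key = rng.choice(sorted(DESIGN_SPACE))
    options = [v for v in DESIGN_SPACE[key] if v != config[key]]
    new_config = dict(config)
    new_config[key] = rng.choice(options)
    return new_config


def anneal(steps=500, t_start=5.0, t_end=0.01, seed=0):
    """Simulated annealing with a geometric cooling schedule."""
    rng = random.Random(seed)
    current = {k: rng.choice(v) for k, v in DESIGN_SPACE.items()}
    current_cost = evaluate(current)
    best, best_cost = dict(current), current_cost
    for step in range(steps):
        # Temperature decays geometrically from t_start to t_end.
        t = t_start * (t_end / t_start) ** (step / max(steps - 1, 1))
        candidate = neighbor(current, rng)
        candidate_cost = evaluate(candidate)
        delta = candidate_cost - current_cost
        # Always accept improvements; accept worse moves with
        # probability exp(-delta / t).
        if delta <= 0 or rng.random() < math.exp(-delta / t):
            current, current_cost = candidate, candidate_cost
            if current_cost < best_cost:
                best, best_cost = dict(current), current_cost
    return best, best_cost


if __name__ == "__main__":
    config, cost = anneal(seed=0)
    print(config, round(cost, 3))
```

A search of this kind keeps no record of why a move helped or hurt, which is the gap the abstract's persistent knowledge base and audit trail are described as addressing.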
