Manufacturers Anticipate Completion of NVIDIA's HBM3e Verification by 1Q24; HBM4 Expected to Launch in 2026

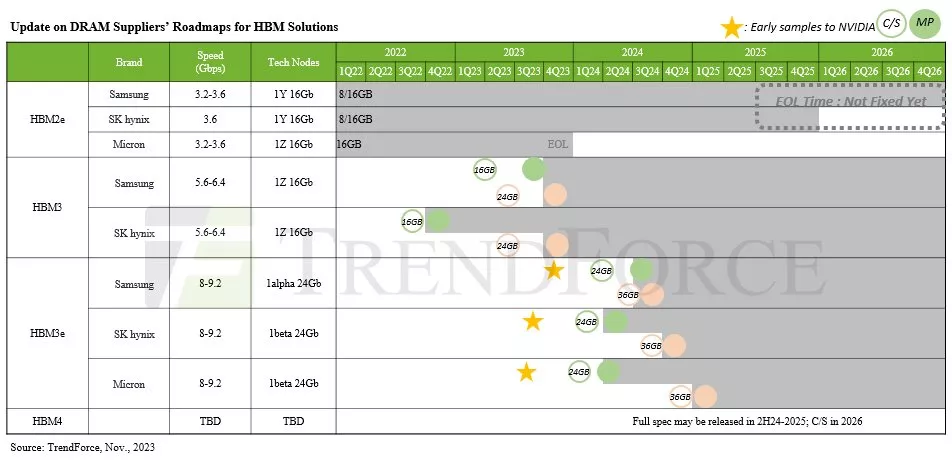

November 29, 2023 -- TrendForce’s latest research into the HBM market indicates that NVIDIA plans to diversify its HBM suppliers for more robust and efficient supply chain management. Samsung’s HBM3 (24GB) is expected to complete verification with NVIDIA by December this year. For HBM3e, the accompanying timeline shows that Micron provided its 8hi (24GB) samples to NVIDIA by the end of July, SK hynix in mid-August, and Samsung in early October.

Because HBM verification is intricate and is estimated to take about two quarters, TrendForce expects that some manufacturers may receive preliminary HBM3e results by the end of 2023, while the major manufacturers are generally expected to have definitive results by 1Q24. Since final evaluations are still underway, these outcomes will shape NVIDIA’s procurement decisions for 2024.

NVIDIA continues to dominate the high-end chip market, expanding its lineup of advanced AI chips

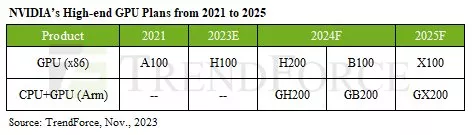

As 2024 approaches, a number of AI chip suppliers are rolling out their latest products. NVIDIA’s current high-end, HBM-equipped AI lineup for 2023 includes the A100/A800 and H100/H800. In 2024, NVIDIA plans to refine its portfolio further with the H200, which uses 6 HBM3e stacks, and the B100, which uses 8 HBM3e stacks. NVIDIA will also pair its own Arm-based CPUs with its GPUs to launch the GH200 and GB200, adding more specialized and powerful AI solutions to its lineup.
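To put these stack counts in perspective, the short sketch below multiplies them by a per-stack capacity. It assumes, hypothetically, that every stack is the 8hi (24GB) HBM3e part sampled to NVIDIA in 2023; the article does not state the per-stack capacity of the H200 or B100, and shipping products may expose less than the raw total. The helper name `raw_hbm_capacity_gb` is illustrative only.

```python
# Hypothetical assumption: every HBM3e stack is the 8hi (24GB) part sampled in 2023.
STACK_CAPACITY_GB = 24

# Stack counts per accelerator, as reported for NVIDIA's 2024 lineup.
STACKS_PER_GPU = {"H200": 6, "B100": 8}


def raw_hbm_capacity_gb(stacks: int, stack_capacity_gb: int = STACK_CAPACITY_GB) -> int:
    """Raw HBM capacity: number of stacks times the assumed per-stack capacity."""
    return stacks * stack_capacity_gb


for gpu, stacks in STACKS_PER_GPU.items():
    print(f"{gpu}: {stacks} x {STACK_CAPACITY_GB}GB = {raw_hbm_capacity_gb(stacks)}GB raw")
```

Under this assumption, the script prints 144GB of raw HBM for the H200 and 192GB for the B100.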

In contrast, AMD’s 2024 focus is on the MI300 series with HBM3, with a transition to HBM3e for the next-generation MI350. The company is expected to begin HBM verification for the MI350 in 2H24, with a significant product ramp-up projected for 1Q25.

In 2H22, Intel Habana launched the Gaudi 2, which uses 6 HBM2e stacks. Its upcoming Gaudi 3, slated for mid-2024, is expected to continue using HBM2e but will move to 8 stacks. TrendForce believes that NVIDIA, with its cutting-edge HBM specifications, product readiness, and strategic timeline, is poised to maintain its leading position in the GPU segment and, by extension, in the competitive AI chip market.

HBM4 may turn toward customization beyond commodity DRAM

HBM4 is expected to launch in 2026, with enhanced specifications and performance tailored to future products from NVIDIA as well as CSPs. To reach higher speeds, HBM4 will be the first generation to build its bottommost logic die (the base die) on a 12nm process wafer, which will be supplied by foundries. As a result, each HBM product will require collaboration between foundries and memory suppliers, reflecting how high-speed memory technology is evolving.

With the push for higher computational performance, HBM4 is set to expand from the current 12-layer (12hi) to 16-layer (16hi) stacks, spurring demand for new hybrid bonding techniques. HBM4 12hi products are set for a 2026 launch, with 16hi models following in 2027.
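Stack height translates directly into per-stack capacity. Since an 8hi HBM3e stack holds 24GB, each DRAM die in it holds 3GB, and the sketch below scales that figure to 12hi and 16hi stacks. Holding per-die density fixed is a simplifying assumption, not something TrendForce states; actual HBM4 parts may use denser dies. The function name `stack_capacity_gb` is hypothetical.

```python
# Derived from the article: an 8hi HBM3e stack holds 24GB, i.e. 3GB per DRAM die.
BASE_STACK_HEIGHT = 8
BASE_STACK_CAPACITY_GB = 24
PER_DIE_GB = BASE_STACK_CAPACITY_GB / BASE_STACK_HEIGHT  # 3.0 GB per die


def stack_capacity_gb(layers: int, per_die_gb: float = PER_DIE_GB) -> float:
    """Capacity of one stack, ASSUMING per-die density stays constant across heights."""
    return layers * per_die_gb


for layers in (8, 12, 16):
    print(f"{layers}hi: {stack_capacity_gb(layers):.0f}GB per stack")
```

With 3GB dies, this yields 24GB, 36GB, and 48GB per stack for 8hi, 12hi, and 16hi, which is why taller stacks (and the hybrid bonding needed to build them) matter for capacity.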

Finally, TrendForce notes a significant shift toward demand for customization in the HBM4 market. Buyers are initiating custom specifications, moving beyond the traditional layout in which HBM sits next to the SoC and exploring options such as stacking HBM directly on top of the SoC. While these possibilities are still being evaluated, TrendForce anticipates a more tailored approach for the future of the HBM industry.

This move toward customization, as opposed to the standardized approach of commodity DRAM, is expected to bring about unique design and pricing strategies, marking a departure from traditional frameworks and heralding an era of specialized production in HBM technology.
