Demand for NVIDIA’s Blackwell Platform Expected to Boost TSMC’s CoWoS Total Capacity by Over 150% in 2024

April 16, 2024 -- NVIDIA’s next-gen Blackwell platform, which includes B-series GPUs and integrates NVIDIA’s own Grace Arm CPU in models such as the GB200, represents a significant development. TrendForce points out that the GB200 and its predecessor, the GH200, both feature a combined CPU+GPU solution, with the GH200 primarily equipped with the NVIDIA Grace CPU and H200 GPU. However, the GH200 accounted for only approximately 5% of NVIDIA’s high-end GPU shipments. The supply chain has high expectations for the GB200, with projections suggesting that its shipments could reach into the millions of units by 2025, potentially making up roughly 40% to 50% of NVIDIA’s high-end GPU shipments.

Although NVIDIA plans to launch products such as the GB200 and B100 in the second half of this year, upstream wafer packaging will need to adopt more complex and high-precision CoWoS-L technology, making the validation and testing process time-consuming. Additionally, more time will be required to optimize the B-series for AI server systems in aspects such as network communication and cooling performance. It is anticipated that the GB200 and B100 products will not see significant production volumes until 4Q24 or 1Q25.

The inclusion of the GB200, B100, and B200 in NVIDIA’s B-series will boost demand for CoWoS capacity, leading TSMC to raise its planned total CoWoS capacity for 2024. Estimated monthly capacity is expected to reach nearly 40K by the end of the year, a 150% year-over-year increase. By 2025, the planned total capacity could nearly double, with NVIDIA’s demand expected to make up more than half of it. Other suppliers, such as Amkor and Intel, currently focus on CoWoS-S technology and are primarily targeting NVIDIA’s H-series. Because technological breakthroughs are expected to be difficult in the short term, their expansion plans remain conservative. Securing additional orders beyond NVIDIA, such as self-developed ASIC chips from CSPs, might lead these suppliers to a more aggressive expansion strategy.
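The capacity figures above imply a few numbers the text does not state outright. The following is a rough back-of-the-envelope sketch, not a TrendForce calculation: it treats "nearly 40K" as exactly 40K, "nearly double" as exactly 2x, and "more than half" as a 50% floor, so the results are approximate bounds. The function and variable names are illustrative only.

```python
def implied_baseline(end_capacity: float, yoy_increase_pct: float) -> float:
    """Back out the prior-year figure from an end value and a YoY increase."""
    return end_capacity / (1 + yoy_increase_pct / 100)


# Figures taken from the article, rounded up (assumption: "nearly" treated as exact).
END_2024_K = 40.0          # monthly CoWoS capacity at end of 2024, in thousands
YOY_INCREASE_PCT = 150.0   # stated year-over-year increase

# A 150% increase means end-2024 capacity is 2.5x the end-2023 level.
end_2023_k = implied_baseline(END_2024_K, YOY_INCREASE_PCT)

# "Could nearly double" by 2025, treated here as exactly 2x (upper bound).
end_2025_k = END_2024_K * 2

# "More than half" of 2025 capacity for NVIDIA, treated as a 50% floor.
nvidia_2025_floor_k = end_2025_k * 0.5

print(f"Implied end-2023 monthly capacity: ~{end_2023_k:.0f}K")
print(f"Upper-bound end-2025 monthly capacity: ~{end_2025_k:.0f}K")
print(f"NVIDIA share of 2025 capacity: above ~{nvidia_2025_floor_k:.0f}K")
```

Under these simplifications, the 2024 target corresponds to an end-2023 base of roughly 16K per month, and NVIDIA's 2025 demand alone would be on the order of the entire end-2024 capacity.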

NVIDIA and AMD’s AI development set to propel HBM3e into mainstream market dominance by the second half of the year

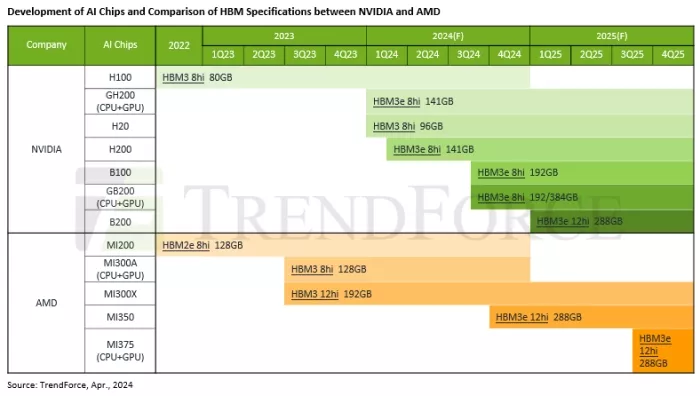

TrendForce has identified three major HBM trends for NVIDIA and AMD’s primary GPU products and their planned specifications beyond 2024. Firstly, a transition from HBM3 to HBM3e is anticipated. NVIDIA is expected to start scaling up shipments of the H200 equipped with HBM3e in the second half of 2024, replacing the H100 as the mainstream product. Following this, other models such as the GB200 and B100 will also adopt HBM3e. Meanwhile, AMD plans to launch the new MI350 by the end of the year and may introduce interim models like the MI32x in the meantime to compete with the H200, with both utilizing HBM3e.

Secondly, HBM capacity will continue to expand to boost the overall computational efficiency and system bandwidth of AI servers. The market currently relies mainly on the NVIDIA H100 with 80GB of HBM; per-GPU capacity is expected to increase to between 192GB and 288GB by the end of 2024. AMD’s new GPUs, starting from the MI300A’s 128GB, will also see increases, reaching up to 288GB.

Thirdly, the lineup of GPUs equipped with HBM3e will evolve from 8Hi configurations to 12Hi configurations. NVIDIA’s B100 and GB200 currently feature 8Hi HBM3e with a capacity of 192GB, and by 2025, the B200 model is planned to be equipped with 12Hi HBM3e, achieving 288GB. AMD’s upcoming MI350, to be launched by the end of this year, and the MI375 series, expected in 2025, are both anticipated to come with 12Hi HBM3e, also reaching 288GB.
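The 192GB and 288GB figures follow directly from the stack height if two things are assumed that the article does not state: each HBM3e DRAM die holds 24Gb (3GB), and each GPU package carries eight HBM stacks. The sketch below shows that arithmetic; the function name and its default parameters are illustrative assumptions, not vendor specifications.

```python
def hbm_capacity_gb(stack_height: int, die_gbit: int = 24, stacks: int = 8) -> int:
    """Total HBM capacity in GB for one GPU package.

    stack_height: DRAM dies per stack (8 for 8Hi, 12 for 12Hi).
    die_gbit:     capacity of one DRAM die in gigabits (assumed 24Gb).
    stacks:       HBM stacks per package (assumed 8).
    """
    die_gb = die_gbit // 8            # gigabits -> gigabytes per die
    per_stack_gb = stack_height * die_gb
    return per_stack_gb * stacks


capacity_8hi = hbm_capacity_gb(8)     # 8 dies x 3GB x 8 stacks
capacity_12hi = hbm_capacity_gb(12)   # 12 dies x 3GB x 8 stacks

print(f"8Hi HBM3e:  {capacity_8hi}GB")
print(f"12Hi HBM3e: {capacity_12hi}GB")
```

Under these assumptions, moving from 8Hi to 12Hi raises capacity by 50% without changing the number of stacks on the package, which matches the step from 192GB to 288GB described above.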

For more information on reports and market data from TrendForce’s Department of Semiconductor Research, please email the Sales Department at SR_MI@trendforce.com.

For additional insights from TrendForce analysts on the latest tech industry news, trends, and forecasts, please visit https://www.trendforce.com/news/
